Edge Computing with Fastly CDN and Varnish VCL for Authenticated Requests

This article briefly explains how we used a CDN to serve static files behind dynamic, authenticated requests while still offloading the file transfers from the origin to the CDN edge.

What is Fastly or Varnish?

Here at Endertech we’ve been working with Varnish and writing custom VCL since the early 3.x days. We think it’s awesome that Varnish has a company like Fastly bringing it to the CDN edge and helping usher along the expansion of “edge computing”. You’ve probably heard of Fastly or Varnish, but what makes them different from the typical CDN?

Varnish is a high-performance caching engine. Typically a cache, such as a standard CDN, uses control panel settings or HTTP headers to control the caching logic. This keeps it simple for CDN users to get going, but if they need more control they’ll have to resort to custom programming. This is where Varnish and its programming language, VCL, come into play. VCL allows custom control over how the caching engine works. Some CDNs do offer custom programming, but it tends to be rather expensive and the CDN’s own team has to do the programming.

Fastly offers an alternative that lets you run your own VCL on their CDN edge servers at a more affordable price. We’ve been wanting to use Fastly for a while now, but few scenarios called for it when a regular CDN or our own Varnish instance would suffice. We try to use the most appropriate tool for the job, and minimizing complexity is a big factor. HTTP headers and the CDN’s various options do give quite a bit of control, but we recently had a scenario for which HTTP headers and the CDN’s options weren’t sufficient or ideal.

How does it help solve the problem?

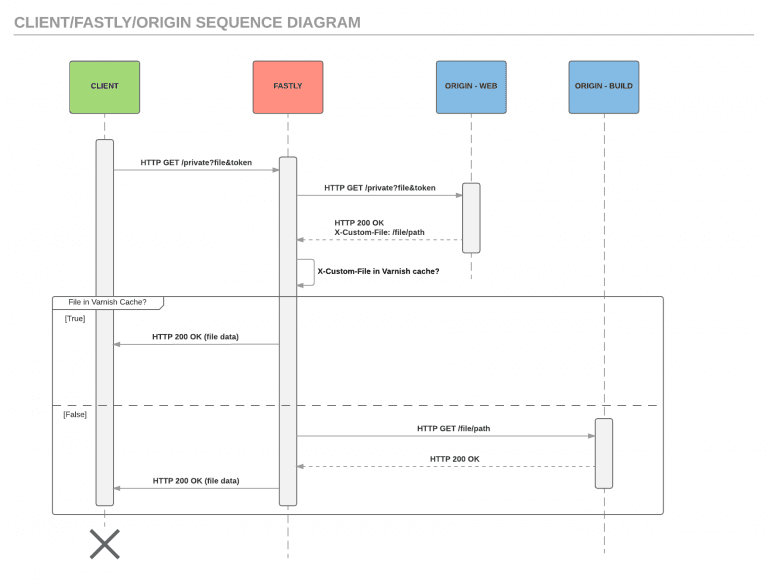

In this particular case there was a big update that was going to be deployed to many thousands of clients. The update was calculated to potentially utilize much more bandwidth than was available in the hosting environment and time was short to make any big changes to that environment. For this scenario we drew up a sequence diagram to help visualize this flow:

The interaction between the client, Fastly, and the origin.

The client makes a request for each file and supplies a token that is verified by the origin. This token proves that the client has purchased the file being accessed. Varnish passes this authenticated request through to the origin without caching it. If the token is valid, the origin responds with a 200 OK and a custom header containing the path to the file. Varnish uses that file path to check whether the file is in its cache. If it isn’t, Varnish makes another request to a second backend server, separate from the origin, to retrieve the file, caches it, and then serves it to the client.
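To make this flow concrete, here is a minimal sketch in Python rather than VCL. It only models the decision logic described above and is not the configuration we deployed. The header name `X-File-Path`, the `Edge` and `Response` classes, and the fake origin and backend are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical header name; the real custom header is not named in this article.
FILE_PATH_HEADER = "X-File-Path"


@dataclass
class Response:
    status: int
    headers: Dict[str, str] = field(default_factory=dict)
    body: bytes = b""


@dataclass
class Edge:
    """Toy model of the edge logic: authenticate first, then serve from cache."""
    auth_origin: Callable[[str, str], Response]  # (url, token) -> Response
    file_backend: Callable[[str], Response]      # (file_path) -> Response
    cache: Dict[str, bytes] = field(default_factory=dict)

    def handle(self, url: str, token: str) -> Response:
        # Step 1: pass the authenticated request to the origin, uncached.
        auth = self.auth_origin(url, token)
        path = auth.headers.get(FILE_PATH_HEADER)
        if auth.status != 200 or path is None:
            # Invalid token or no file path: relay the origin's answer as-is.
            return auth

        # Step 2: look the file up by its path, not by the client's token.
        if path not in self.cache:
            fetched = self.file_backend(path)
            if fetched.status != 200:
                return fetched
            self.cache[path] = fetched.body

        # Step 3: serve the cached file to the client.
        return Response(200, body=self.cache[path])


# Fake origin and file backend, for demonstration only.
backend_hits = []

def fake_origin(url: str, token: str) -> Response:
    if token in {"alice-token", "bob-token"}:
        return Response(200, {FILE_PATH_HEADER: "/files/update.bin"})
    return Response(403)

def fake_backend(path: str) -> Response:
    backend_hits.append(path)
    return Response(200, body=b"update-bytes")

edge = Edge(fake_origin, fake_backend)
first = edge.handle("/download/update", "alice-token")
second = edge.handle("/download/update", "bob-token")
denied = edge.handle("/download/update", "wrong-token")
print(first.status, second.status, denied.status, len(backend_hits))
# prints: 200 200 403 1
```

The point to notice is that the cache is keyed by the file path returned from the origin, so two clients with different tokens share one cached copy and the file backend is contacted only once.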

Since the file is cached, future requests for that same file are still authenticated as usual but are then served from the cache, with no further request to the backend until the cached object expires. This also applies to different clients with different tokens requesting the same file. It is also possible to cache the authentication response for a shorter time than the file, such as a minute. This can further offload computing and traffic from the origin.
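The short-lived authentication cache can be sketched the same way. This is a Python model under stated assumptions, not VCL: the `AuthCache` class, its `verify` callback, and the choice to key entries by token and URL are hypothetical, and the 60-second TTL simply mirrors the "such as a minute" example above.

```python
import time
from typing import Callable, Dict, Tuple


class AuthCache:
    """Remember positive auth decisions for a short time (hypothetical helper)."""

    def __init__(self, verify: Callable[[str, str], bool], ttl: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._verify = verify  # asks the origin; assumed to be the costly step
        self._ttl = ttl
        self._clock = clock    # injectable so the demo can control time
        self._expires: Dict[Tuple[str, str], float] = {}

    def is_allowed(self, token: str, url: str) -> bool:
        key = (token, url)
        now = self._clock()
        expiry = self._expires.get(key)
        if expiry is not None and now < expiry:
            return True        # still fresh: skip the origin entirely
        self._expires.pop(key, None)
        if self._verify(token, url):
            self._expires[key] = now + self._ttl
            return True
        return False           # denials are not cached in this sketch


# Demonstration with a fake origin check and a fake clock.
origin_calls = []

def verify(token: str, url: str) -> bool:
    origin_calls.append((token, url))
    return token == "good"

fake_now = [0.0]
auth = AuthCache(verify, ttl=60.0, clock=lambda: fake_now[0])

auth.is_allowed("good", "/f")  # asks the origin
fake_now[0] = 30.0
auth.is_allowed("good", "/f")  # answered from the auth cache
fake_now[0] = 61.0
auth.is_allowed("good", "/f")  # entry expired, asks the origin again
```

Keeping this TTL much shorter than the file's TTL limits how long a revoked token would keep working, at the cost of more requests reaching the origin.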

The benefit of this setup is that file retrieval is offloaded from the origin server. This helps keep the origin server’s network from being saturated, because the CDN fills its cache on demand and then serves repeat requests itself. Also, because the backend is separate from the origin, it can be a cluster of servers or even a cloud object storage provider such as AWS S3 or Google Cloud Storage, and that choice is transparent to the end client making the requests.

Are there other ways to solve this?

This isn’t the only way to solve this particular challenge, though. Another possibility was “Signed URLs”, which allow time-limited retrieval of files from a cloud provider like AWS S3 or Google Cloud Storage. This protects the original files so that only authenticated requests can retrieve them. We didn’t go this route because there was limited time to modify and fully test the file retrieval scripts, and because we have more experience with Varnish and VCL. We’re also looking to try out section.io when the opportunity allows, possibly in conjunction with Fastly.
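For readers unfamiliar with the idea, the general principle behind a signed URL can be sketched with an HMAC and an expiry timestamp. This is a generic illustration only: it is not the signing scheme that AWS S3 or Google Cloud Storage actually use, and the secret, the query parameter names, and both function names are assumptions.

```python
import hashlib
import hmac
from urllib.parse import parse_qs, urlencode, urlparse

SECRET = b"demo-secret"  # hypothetical shared key, for illustration only


def sign_url(path: str, expires_at: int, secret: bytes = SECRET) -> str:
    """Return the path with an expiry time and an HMAC signature appended."""
    message = f"{path}\n{expires_at}".encode()
    sig = hmac.new(secret, message, hashlib.sha256).hexdigest()
    return f"{path}?{urlencode({'expires': expires_at, 'sig': sig})}"


def verify_url(url: str, now: int, secret: bytes = SECRET) -> bool:
    """Accept the URL only if it has not expired and the signature matches."""
    parsed = urlparse(url)
    query = parse_qs(parsed.query)
    try:
        expires_at = int(query["expires"][0])
        sig = query["sig"][0]
    except (KeyError, ValueError):
        return False
    if now >= expires_at:
        return False
    message = f"{parsed.path}\n{expires_at}".encode()
    expected = hmac.new(secret, message, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the signature matched.
    return hmac.compare_digest(sig.encode(), expected.encode())


# Times are plain integers (seconds) so the example is deterministic.
url = sign_url("/files/update.bin", expires_at=1000)
valid_before_expiry = verify_url(url, now=999)
valid_after_expiry = verify_url(url, now=1000)
valid_if_tampered = verify_url(url.replace("update", "other"), now=999)
```

With a scheme like this the storage provider can check the request on its own, without calling back to the origin, which is why it is a common alternative to the token flow described earlier.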

We remain excited to see edge computing platforms like Fastly keep gaining traction as the internet continues to evolve toward being more decentralized.

Have a similar web development challenge, such as needing a CDN with more capability or looking to move some tasks to the edge network? Contact us to help you implement the most effective solution.